When buyers ask AI “best [your category],” your brand should be in the answer.

AI models can split a single prompt into many sub-queries, and each model does it differently. We measure where ChatGPT, Perplexity, Google AI Overviews, and Gemini cite you, then close the gaps with citation-grade press on 1,500+ vetted publishers.

Scan delivered by Lectern, our AI visibility agent. Free. No credit card.

Trusted by 2,000+ teams building AI-search visibility

Press that's measurable, citable, and compounding

Visibility and sentiment, query by query

Track which sub-queries each model actually runs, where competitors win, and how AI describes you when you do appear — 'enterprise only,' 'too expensive,' or recommended after a competitor. Snapshot-to-snapshot reporting shows which placements moved both share-of-voice and sentiment, so press becomes a pipeline number, not a vanity hit.

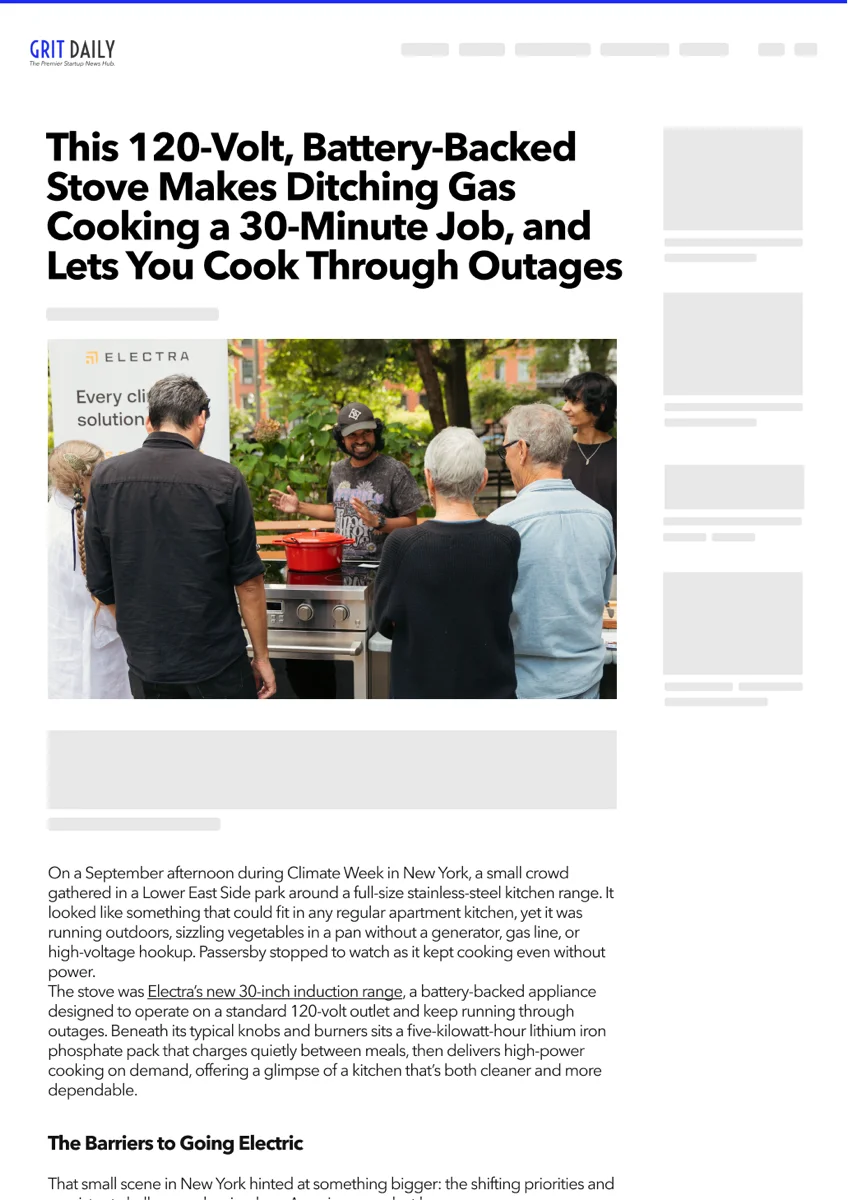

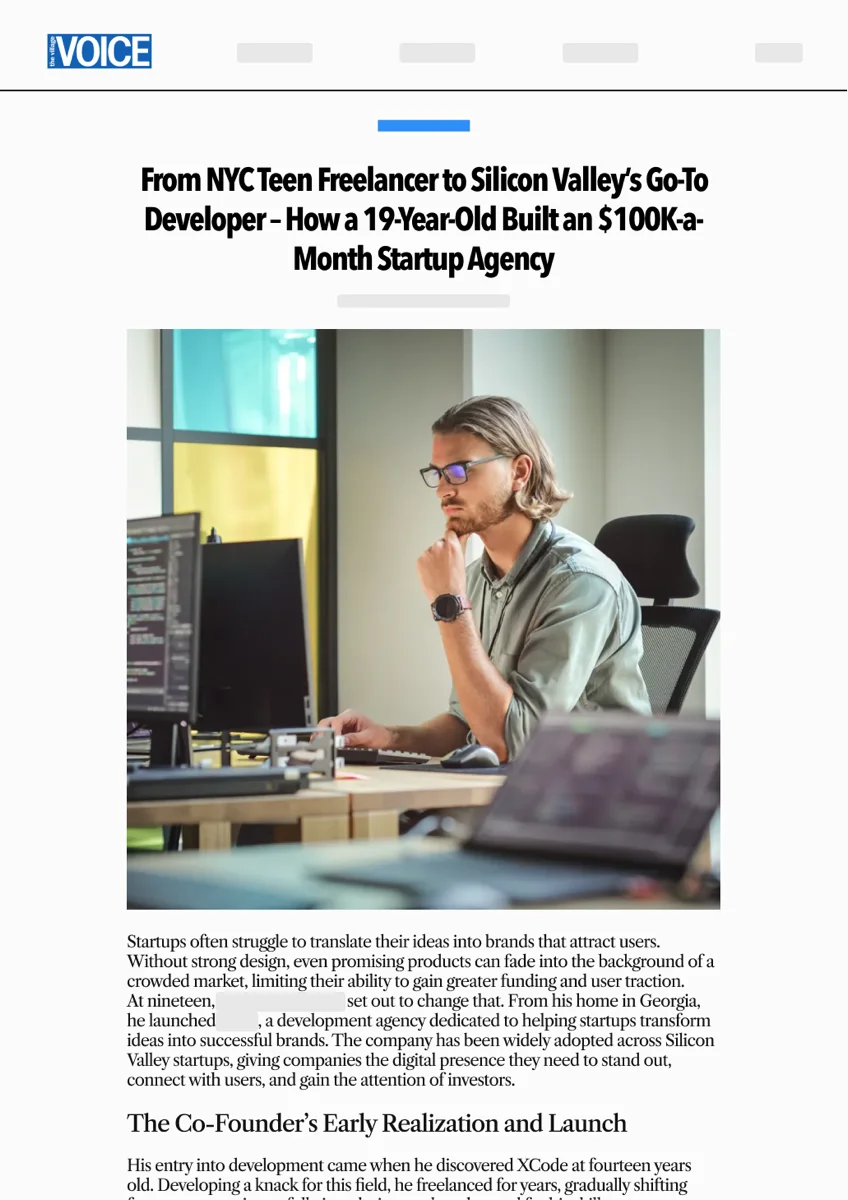

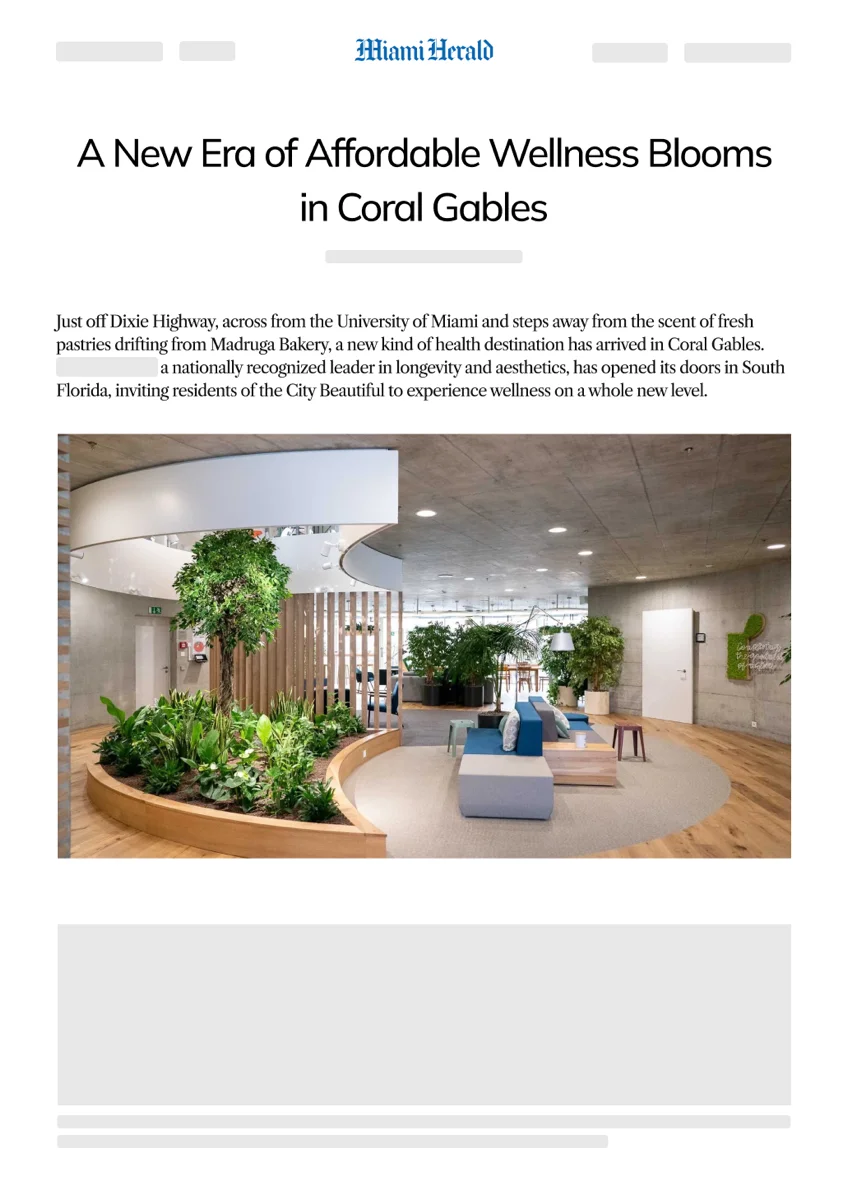

Citation-grade placements

1,500+ publishers — including local newsrooms AI models cite as legitimate sources, not low-DA aggregators that get filtered out. Every article ships with answer-first structure, claim-level entity context, and FAQ schema so the facts are easy to extract and quote.

A compounding asset, not a one-off hit

Each placement can keep earning citations across model retraining cycles. Unlike paid ads that vanish when the budget stops, a single AEO-grade story can keep surfacing in AI answers for months, turning a one-time spend into a category position that compounds.

How AEO works with Presscart + Lectern

Scan visibility and sentiment

Lectern runs your target prompts plus the sub-queries each model fans out to, sampling each one 7 to 10 times to cut through variance. You get a baseline of where you appear, where competitors win, and how AI describes you when it does mention you.
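
To make the sampling idea concrete, here is a minimal sketch of how a mention rate could be estimated from repeated runs of one sub-query. It is an illustration only, not Lectern's actual method: the brand names and sample answers are hypothetical, and a plain substring match stands in for real entity detection.

```python
def mention_rate(responses, brand):
    """Fraction of sampled answers that mention the brand (case-insensitive).

    Assumption: a substring match is good enough for illustration.
    """
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)


# Hypothetical: eight sampled answers to the same sub-query from one model.
samples = [
    "Top picks: Acme, Globex, and Initech.",
    "Globex and Initech lead this category.",
    "Most teams start with Acme.",
    "Consider Globex for enterprise needs.",
    "ACME is a strong fit for startups.",
    "Initech, then Acme, then Globex.",
    "Globex is the usual recommendation.",
    "Acme and Initech both offer free tiers.",
]

rate = mention_rate(samples, "Acme")
print(f"Acme appears in {rate:.1%} of sampled answers")
```

A single run would report either 0% or 100% for this sub-query. Repeated sampling is what turns a noisy yes/no into a rate you can compare over time.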

Place citation-grade press

Pick from 1,500+ publishers with transparent line-item pricing. Our Editorial Studio writes answer-first articles structured for AI retrieval. Live URL on every placement, or full refund.

Measure citation lift

Lectern re-runs the same prompts at Day 30, 60, and 90. You see which placements moved which queries on which platforms — the data your CFO needs to keep funding press as a pipeline channel.
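
As a rough sketch of what a snapshot-to-snapshot comparison might look like, the snippet below subtracts baseline citation rates from a later snapshot for each query and platform pair. The data, the key layout, and the `citation_lift` helper are all hypothetical and are not taken from Lectern's reports.

```python
def citation_lift(baseline, followup):
    """Change in citation rate per (query, platform) between two snapshots.

    Pairs missing from a snapshot are treated as a 0.0 citation rate.
    """
    keys = sorted(set(baseline) | set(followup))
    return {k: round(followup.get(k, 0.0) - baseline.get(k, 0.0), 3) for k in keys}


# Hypothetical citation rates at the baseline scan and at Day 30.
day0 = {
    ("best crm for startups", "chatgpt"): 0.25,
    ("best crm for startups", "perplexity"): 0.5,
}
day30 = {
    ("best crm for startups", "chatgpt"): 0.625,
    ("best crm for startups", "perplexity"): 0.5,
}

lift = citation_lift(day0, day30)
for (query, platform), delta in lift.items():
    print(f"{platform:<12} {query!r}: {delta:+.3f}")
```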

See where AI puts your brand today.

The free Lectern scan runs your target prompts plus the sub-queries each model fans out to. You'll see which queries cite you, which cite your competitors, how AI describes you when you do appear, and where the fastest wins are. Takes about 10 minutes to set up.

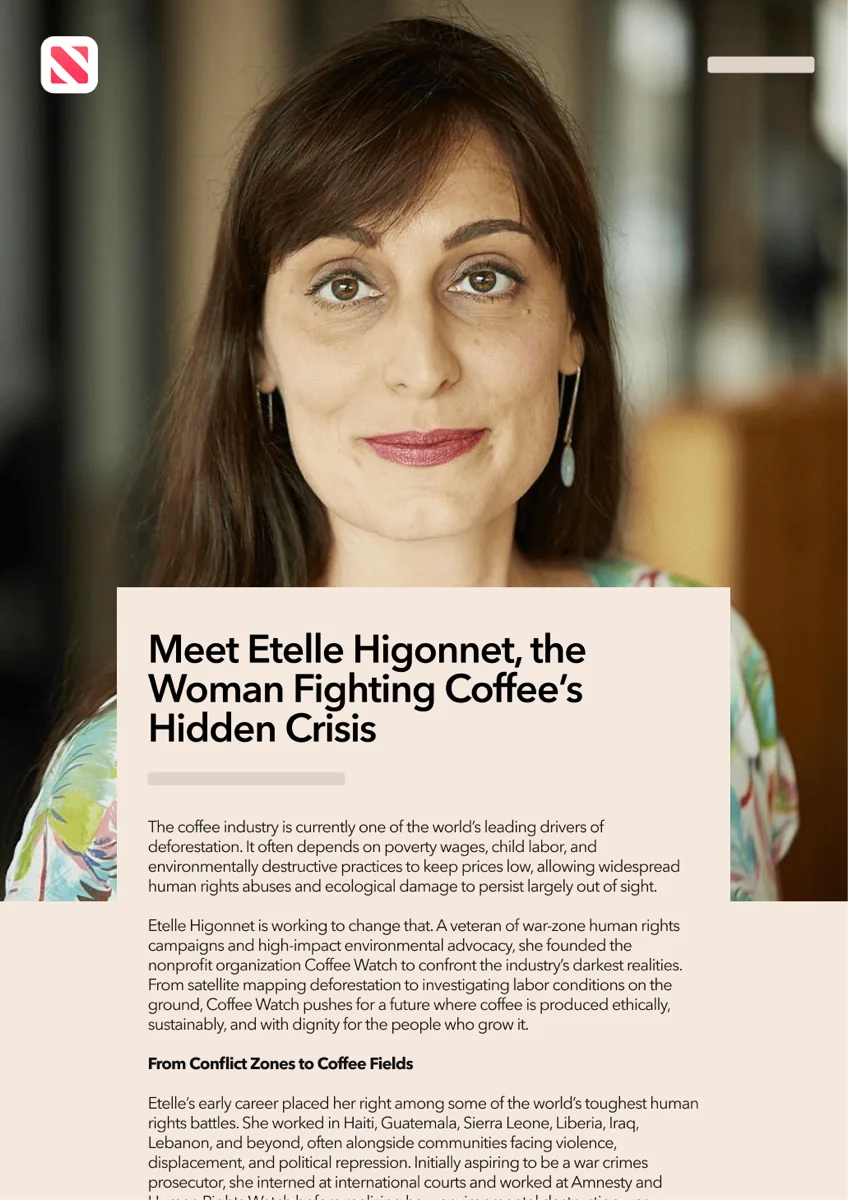

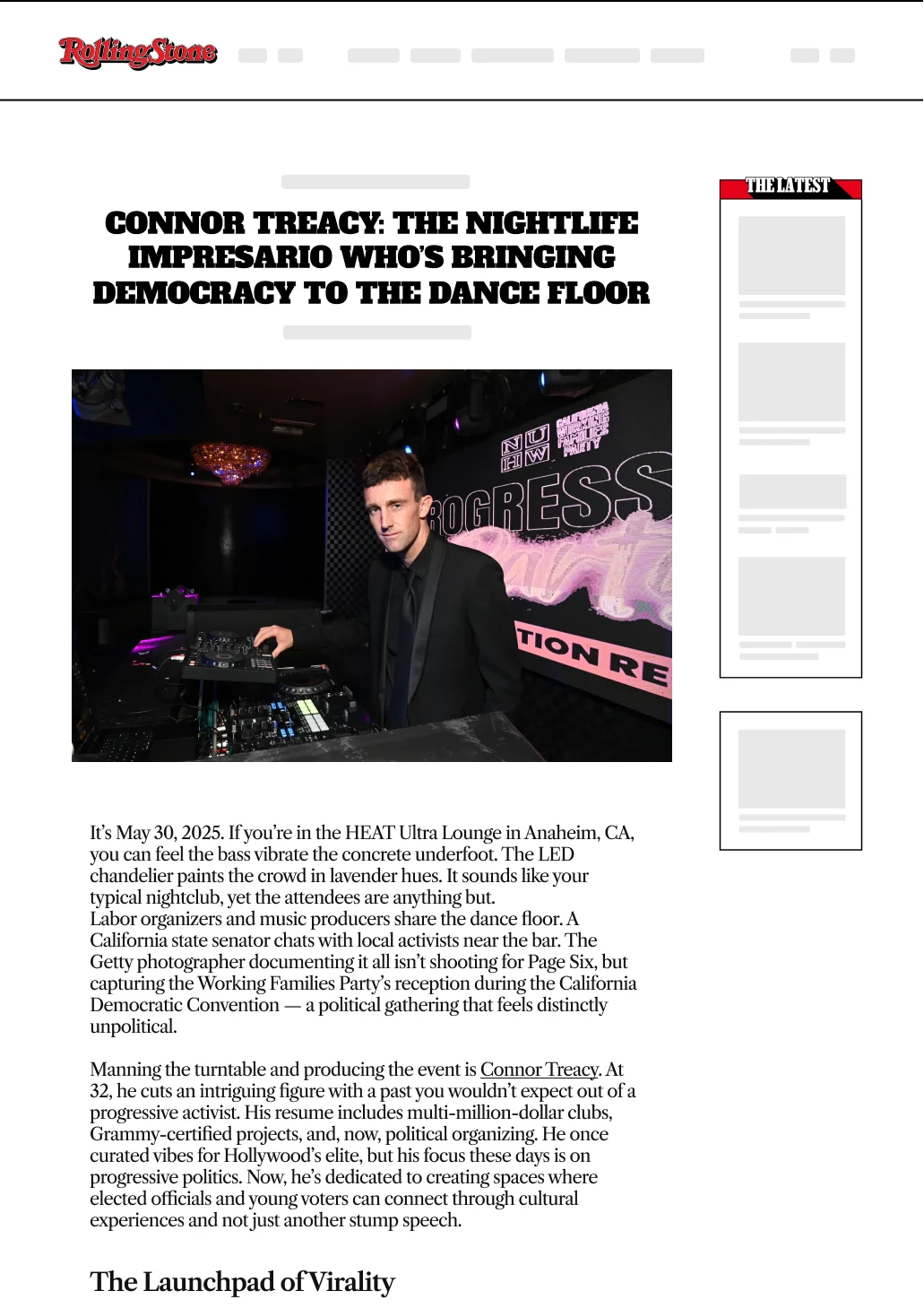

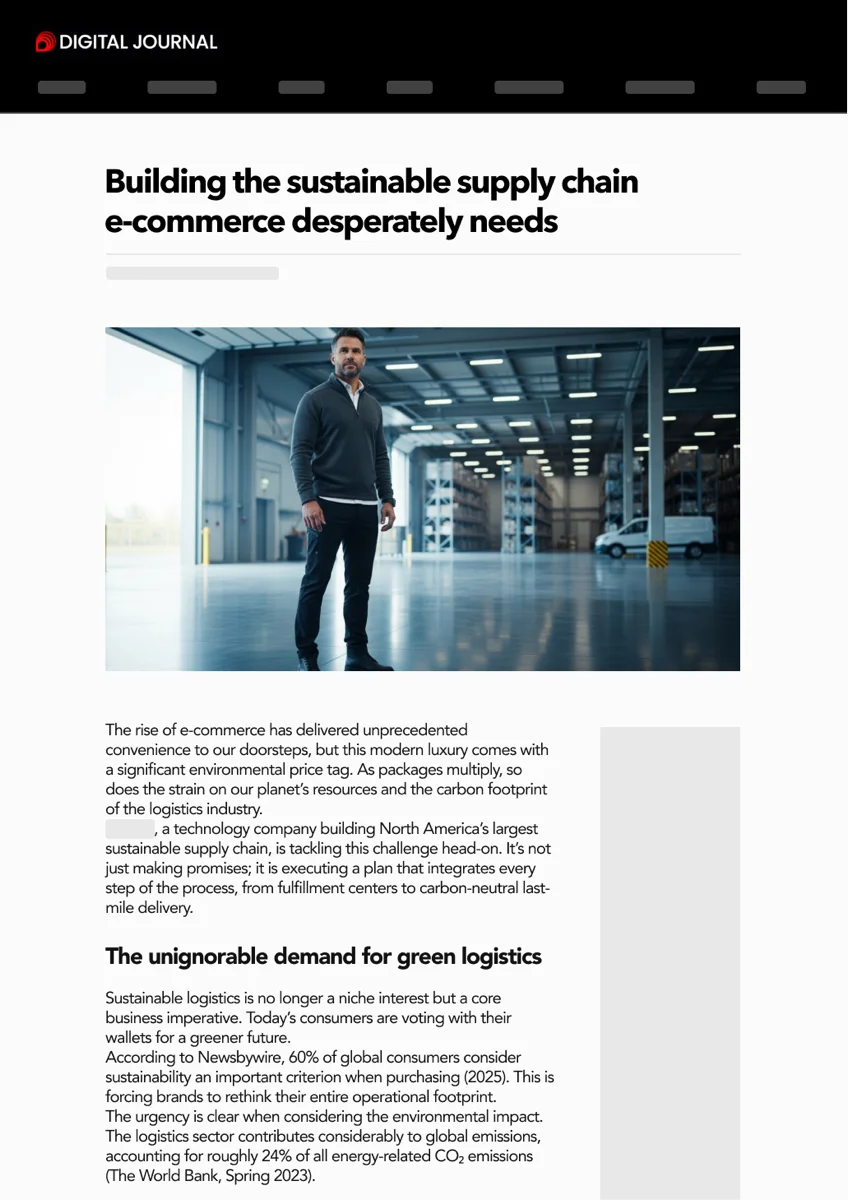

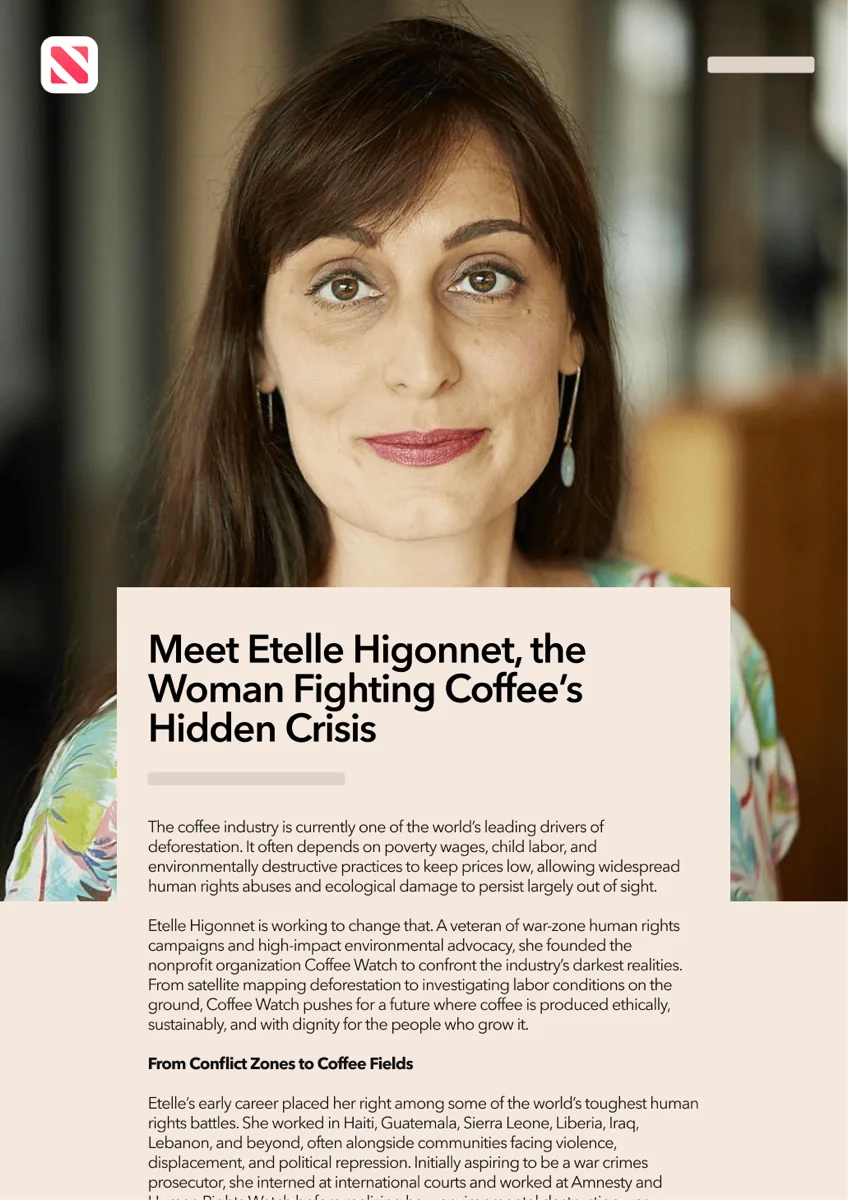

Placements AI models actually cite

Real articles, real publishers, real live URLs.

Frequently asked questions about AEO

The what, why, and how of Presscart.

Isn't AEO just SEO with a new name?

AEO is SEO plus three things: answer-ready content (clean blocks AI can extract), source management (cleaning up how you're described in third-party places already cited by AI), and compliant digital PR (independent sources where you can't win on your own domain). Google explicitly says no special schema or 'AEO file' is required to appear in AI Overviews or AI Mode — the fundamentals still matter, the surfaces are different.

What's the difference between a visibility gap and a sentiment gap?

A visibility gap means you're not being cited at all. A sentiment gap means you're being cited but described inaccurately: 'enterprise only' when you have a $100 plan, 'a competitor of X' when you're actually the recommended fit, or as the runner-up in your own category. Sentiment gaps are usually harder to fix, and they take up a meaningful share of the program's effort.

How does query fan-out actually work?

Google has documented that AI Overviews and AI Mode may issue multiple related sub-searches across subtopics — and in AI Mode, a 'multitude' of queries simultaneously. So 'best CRM for startups' becomes 'CRM with free tier under 1000 contacts,' 'CRM with HubSpot import,' 'CRM for solo founders,' and so on. Microsoft exposes 'grounding queries' in Bing Webmaster Tools that show similar behavior. We map those branches per-prompt, identify which the brand can plausibly win, and target those.
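
One way to picture branch mapping is as a table of sub-queries and the brands cited for each. The sketch below computes fan-out coverage for a single brand from such a table. The branch names reuse the CRM example above, while the brands and the `fanout_coverage` helper are hypothetical.

```python
def fanout_coverage(branches, brand):
    """Return (coverage, missed) for a brand across fan-out branches.

    coverage: share of branches where the brand is among the cited brands.
    missed:   branches where it is absent, in their original order.
    """
    if not branches:
        return 0.0, []
    missed = [query for query, cited in branches.items() if brand not in cited]
    won = len(branches) - len(missed)
    return won / len(branches), missed


# Hypothetical cited brands per branch of "best CRM for startups".
branches = {
    "CRM with free tier under 1000 contacts": ["Globex", "Initech"],
    "CRM with HubSpot import": ["Acme", "Globex"],
    "CRM for solo founders": ["Initech"],
}

coverage, missed = fanout_coverage(branches, "Acme")
print(f"Coverage: {coverage:.0%}")
print("Branches to target:", missed)
```

The missed list is the starting point for choosing which branches a brand can plausibly win.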

Can you guarantee I'll show up in ChatGPT?

No, and you should be skeptical of anyone who says they can. AI retrieval is non-deterministic, varies by user context, and changes as models update. What we can commit to is tracking citation share, cited pages, grounding-query coverage, AI-referral sessions (via utm_source=chatgpt.com and Bing's AI Performance dashboard), and conversions, and reporting the trajectory monthly.

Why a 4-month minimum?

Because that's how the systems work. Google's docs note that recrawling and reprocessing structured-data or content changes can take days and sometimes longer. Bing says changes can take anywhere from seconds to a week to be reflected. OpenAI says its systems can take about 24 hours to pick up a robots.txt update. Once content is published, citations build over weeks as models re-retrieve. A multi-month engagement is operational reality, not a sales gimmick.

Are your PR placements compliant with Google and the FTC?

Yes. Paid editorial uses rel="sponsored" or rel="nofollow" where required by the publisher and Google's policies. We disclose material connections per FTC endorsement guidelines. We don't do site-reputation-abuse-style placements — irrelevant white-label pages dropped on authority domains purely to borrow ranking signals. That's exactly what Google's site-reputation-abuse policy is designed to catch.
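
For readers who want to spot-check a placement themselves, here is a small sketch that flags links to a client domain that carry neither rel="sponsored" nor rel="nofollow". It uses Python's standard html.parser. The `PaidLinkAuditor` class, the domain, and the sample HTML are hypothetical, and matching the domain as a substring of the href is a simplification.

```python
from html.parser import HTMLParser


class PaidLinkAuditor(HTMLParser):
    """Collect links to a paid domain that lack a qualifying rel value."""

    QUALIFIERS = {"sponsored", "nofollow"}

    def __init__(self, paid_domain):
        super().__init__()
        self.paid_domain = paid_domain
        self.unqualified = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href") or ""
        # rel can hold several space-separated tokens, e.g. "sponsored noopener".
        rel_tokens = set((attr.get("rel") or "").lower().split())
        if self.paid_domain in href and not (rel_tokens & self.QUALIFIERS):
            self.unqualified.append(href)


# Hypothetical article body with one qualified and one unqualified client link.
article_html = (
    '<p><a href="https://acme.example/pricing" rel="sponsored noopener">Acme</a> '
    '<a href="https://acme.example/blog">Acme blog</a> '
    '<a href="https://other.example/">unrelated</a></p>'
)

auditor = PaidLinkAuditor("acme.example")
auditor.feed(article_html)
print("Links missing a rel qualifier:", auditor.unqualified)
```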

Will you edit my Wikipedia page or pay for fake reviews?

No. Wikipedia notability requires significant coverage in independent reliable sources, and conflict-of-interest rules discourage paid editing. The right path is to earn coverage first, then request factual corrections transparently where allowed. Compensated reviews on community platforms like Reddit also fall under FTC disclosure rules. We work the durable way: real coverage, real evidence, real corrections.

How do you actually measure progress?

On Google: AI Overview clicks and impressions inside Search Console, plus AI Mode follow-up queries treated as new queries. On Bing: total citations, cited pages, grounding queries, and visibility trends from the AI Performance dashboard. On OpenAI: ChatGPT search referrals tracked via utm_source=chatgpt.com. In GA4: engaged sessions and conversions from those referrals. The KPI stack: citation share, cited pages, fan-out coverage, qualified AI referral sessions, conversions.
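
The ChatGPT referral check comes down to reading one query parameter. Below is a minimal sketch using Python's standard urllib.parse. The landing URLs are hypothetical, and in practice the same filter would usually be set up as a GA4 segment rather than run as a script.

```python
from urllib.parse import parse_qs, urlparse


def is_chatgpt_referral(landing_url):
    """True if the landing URL carries utm_source=chatgpt.com."""
    params = parse_qs(urlparse(landing_url).query)
    return "chatgpt.com" in params.get("utm_source", [])


# Hypothetical landing URLs pulled from session logs.
landing_urls = [
    "https://example.com/pricing?utm_source=chatgpt.com",
    "https://example.com/blog/post?utm_source=newsletter&utm_medium=email",
    "https://example.com/",
    "https://example.com/demo?ref=x&utm_source=chatgpt.com",
]

chatgpt_sessions = [url for url in landing_urls if is_chatgpt_referral(url)]
print(f"{len(chatgpt_sessions)} of {len(landing_urls)} sessions came from ChatGPT")
```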

What's actually delivered each month?

Discovery (ICPs, buyer-journey prompt mapping, source-control matrix) up front. Then per month: 8 answer-ready articles on your site (typically 2/week), source-update sprints across the third-party surfaces already being cited (business profiles, retailer/marketplace listings, app-store/software-directory pages), a community-source plan where relevant (Reddit, forums, niche communities — done compliantly), and a monthly citation report. Strategic PR runs as a priced add-on against the highest-value fan-out branches.

Who is this not for?

Three groups: (1) Teams under ~$5K/mo that can't fund both content and source/PR work simultaneously — the program won't have enough surface area to move metrics. (2) Companies that need a guaranteed mention in under 30 days — that's a different motion. (3) Large teams that want to debate every change. The program works best when one operator can approve work and stay out of the way.

What about the Search Console impression-data caveat?

Worth knowing: Google confirmed in April 2026 that a Search Console logging error affected impression, CTR, and average-position data from May 13, 2025 through April 27, 2026. Clicks were not affected. Reports covering that window lean on clicks, conversions, Bing AI citations, and downstream engagement rather than on impression trends. We annotate the affected range when it shows up.

Be the brand AI cites.

Start with a free Lectern scan to see where you stand across ChatGPT, Perplexity, Google AI Overviews, and Gemini. Then place citation-grade press that compounds over every model retraining cycle.